After much blood, sweat, tears and other bodily fluids, I’ve finally managed to reduce boot times on my computer. Although the time I spent getting it working has most definitely surpassed the time saved, it was still a great learning experience. To spare you the complicated and frustrating process I went through, I’ll explain from start to finish what needs to be done, including workarounds for some of the less functional tasks.

Quick overview

EDIT: Due to the recent re-addition of the e4rat-lite package to the AUR, some of this process has been simplified, and adjustments have been made accordingly.

I want to start off by pointing out that the method used to accomplish this is not for the faint of heart, and some of the techniques and workarounds require more advanced Linux and programming knowledge. The method uses a package called e4rat-lite to optimize file placement on HDDs to improve loading times. It will most likely have no effect on SSDs, and may even worsen performance on them. Note also that the explanation given is for Arch Linux, and that the process may vary (or be less complicated) on other systems.

The process for getting this working goes as follows:

- Compile a custom Linux kernel with auditing support

- Build and install Audit, with custom options

- Install e4rat-lite

- (Optional) Configure boot benchmarks

- Run the e4rat-lite tools

At the end, I’ll also show some graphs illustrating the improvements this made to my system.

Building custom kernel

Update: As of kernel version 4.18, auditing is enabled by default, so the kernel no longer needs to be built manually. Whoho!

e4rat uses the audit package to determine what files are being used during boot. As the kernel does not have audit enabled by default, a custom kernel must be used instead. In theory a pre-built kernel can be used for this purpose, such as the linux-hardened package (installed along with linux-hardened-headers). Alternatively, one can build a custom kernel with the necessary build flags.
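Before going any further, it may be worth checking whether the kernel you are running already has the needed options. Here is a minimal sketch, assuming the kernel exposes its configuration at /proc/config.gz; check_audit_config is a hypothetical helper name, not part of any package.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether a kernel config enables the audit options
# e4rat-lite relies on. Works on plain or gzip-compressed configs (e.g. /proc/config.gz).
check_audit_config() {
    local cfg="$1" opt status=0
    for opt in CONFIG_AUDIT CONFIG_AUDITSYSCALL; do
        # zcat -f copies non-gzip input through unchanged, so both formats work
        if zcat -f -- "$cfg" | grep -qxF "${opt}=y"; then
            echo "${opt}: enabled"
        else
            echo "${opt}: missing"
            status=1
        fi
    done
    return "$status"
}
```

Run it as `check_audit_config /proc/config.gz`; a non-zero exit status means at least one of the two options is missing.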

To build the kernel, the asp package and the base-devel group (notably GCC) are required. To begin customizing the kernel, run the following:

$ cd ~/

$ mkdir build

$ cd build/ # any directory can be used for this

$ ASPROOT=. asp checkout linux

Once this is completed, move into the linux/repos/core-x86_64 directory, where changes can be made to the package. An optional, though recommended, modification in the PKGBUILD file is to change the pkgbase value. This allows the new kernel to be installed without replacing the current one. Note that once the collection and reallocation steps described below are complete, the modified kernel will no longer be needed. I replaced the name of the package (linux) with linux-audit.
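For those who prefer to script the pkgbase change, here is a minimal sketch. It assumes GNU sed and that pkgbase is assigned on a single line, possibly followed by a comment; rename_pkgbase is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rename the kernel package by rewriting pkgbase in a PKGBUILD.
# Assumes GNU sed (-i) and that pkgbase is assigned on one line.
rename_pkgbase() {
    local pkgbuild="$1" name="$2"
    # Replace only the value after "pkgbase=", leaving any trailing comment intact;
    # commented-out lines such as "#pkgbase=..." are not touched
    sed -i "s/^pkgbase=[^[:space:]#]*/pkgbase=${name}/" "$pkgbuild"
}
```

From linux/repos/core-x86_64, this would be `rename_pkgbase PKGBUILD linux-audit`.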

Next, a couple of modifications must be made to the config file (previously named config.x86_64): the existing CONFIG_AUDIT line is modified and a CONFIG_AUDITSYSCALL line is added, so that they read:

CONFIG_AUDIT=y

CONFIG_AUDITSYSCALL=y
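The same two changes can be scripted as well. This is a sketch assuming GNU sed, pointed at the config file in linux/repos/core-x86_64; enable_audit_options is hypothetical. It deletes any existing setting for each option (including the "# ... is not set" form) and appends the enabled one, so running it twice does no harm.

```shell
#!/usr/bin/env bash
# Hypothetical helper: enable CONFIG_AUDIT and CONFIG_AUDITSYSCALL in a kernel config.
# Assumes GNU sed (for -i). Safe to run more than once.
enable_audit_options() {
    local cfg="$1" opt
    for opt in CONFIG_AUDIT CONFIG_AUDITSYSCALL; do
        # Drop any existing setting: "OPT=..." or "# OPT is not set"
        sed -i -e "/^${opt}=/d" -e "/^# ${opt} is not set\$/d" "$cfg"
        # Append the enabled setting at the end of the file
        echo "${opt}=y" >> "$cfg"
    done
}
```

For example, `enable_audit_options config`, followed by the checksum update below.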

After this, the checksums must be updated with this command:

$ updpkgsums

This may take some time, as it downloads the full Linux source. Upon completion, the building process can begin. Note that the build itself also takes a while (30 minutes in my case), depending on your CPU speed and the number of CPU threads used. See my Arch Setup page for information on configuring this.
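On Arch, the number of parallel jobs makepkg passes to make is controlled by MAKEFLAGS in /etc/makepkg.conf. One common setting, shown here as an excerpt, uses one job per CPU thread reported by nproc; treat it as a starting point rather than the exact configuration from my Arch Setup page.

```shell
# Excerpt of /etc/makepkg.conf, which makepkg reads as a bash script
# Let make run one job per available CPU thread
MAKEFLAGS="-j$(nproc)"
```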

$ makepkg -s

Note: this command may need to be run with --skippgpcheck to avoid errors from failed signature checks. While the kernel is compiling, you may be prompted about additional options to enable. Simply choose y (or m) for all of these.

Once this is completed, the new packages can be installed. It is recommended to install the headers before the kernel package.

$ sudo pacman -U linux-audit-headers.pkg.tar.xz

$ sudo pacman -U linux-audit.pkg.tar.xz

Please note that the package file names will vary with the Linux kernel version. Upon completion, the new kernel can be added to the boot loader (Grub Customizer can be used for this).

Installing Audit

While audit would normally be simple to install, a small change must be made to its package build to fix an incompatibility with e4rat. Instead of installing it automatically, it must be built manually, for example:

$ yaourt -Sb audit

Yaourt’s -b option builds the package locally from source. When prompted to edit the PKGBUILD, make the following modification:

options=('emptydirs' 'staticlibs')
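If you would rather not edit the PKGBUILD by hand at the prompt, the same change can be applied with a small function. This sketch assumes GNU sed and that the options array is declared on a single line, as above; add_staticlibs_option is hypothetical, and if no options line exists it appends one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: ensure 'staticlibs' appears in a PKGBUILD's options array.
# Assumes GNU sed and a single-line declaration such as options=('emptydirs').
add_staticlibs_option() {
    local pkgbuild="$1"
    if grep -q "^options=(.*'staticlibs'" "$pkgbuild"; then
        return 0                                      # already present
    elif grep -q '^options=(' "$pkgbuild"; then
        # Insert 'staticlibs' just before the closing parenthesis
        sed -i "s/^options=(\(.*\))/options=(\1 'staticlibs')/" "$pkgbuild"
    else
        echo "options=('staticlibs')" >> "$pkgbuild"  # no options line yet
    fi
}
```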

With this the installation should finish without issue.

Installing e4rat-lite

Thanks to the resurgence of the lite version of the package, this step is now quite simple. Just install the e4rat-lite-git package from the AUR, once the audit package is installed. That’s it. All done. Nothing more.

… hooray!

(Optional) Packages for performance testing

The Arch Wiki recommends using Bootchart to measure the performance of the system while booting, and to generate nice graphs to demonstrate it. While I could not successfully generate charts with the original Bootchart, Bootchart2 (also listed) worked, with a minor modification. Simply installing it and adding a kernel parameter at boot lets it run, though the parameter differs slightly from what the wiki currently shows:

init=/sbin/bootchartd2

After this, the pybootchartgui -i command will generate an interactive graph.

Note that, in order to use bootchartd2 with e4rat-lite-preload, the init line in the /sbin/bootchartd2 file must be modified:

init=/usr/bin/e4rat-lite-preload
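Assuming your copy of /sbin/bootchartd2 sets this on a line beginning with init= (worth checking first, as I can’t promise this holds for every bootchart2 version), the change can be applied with a small function like this hypothetical set_bootchart_init, which keeps a backup of the original file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: point a bootchartd2-style script at a different init.
# Assumes GNU sed and that the script sets its init on a line starting with "init=".
set_bootchart_init() {
    local script="$1" target="$2"
    cp -- "$script" "$script.bak"                  # keep a backup of the original
    sed -i "s|^init=.*|init=${target}|" "$script"  # replace the whole init= line
}
```

As root: `set_bootchart_init /sbin/bootchartd2 /usr/bin/e4rat-lite-preload`.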

Running the e4rat tools

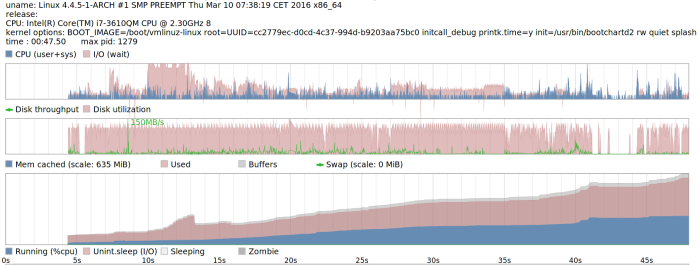

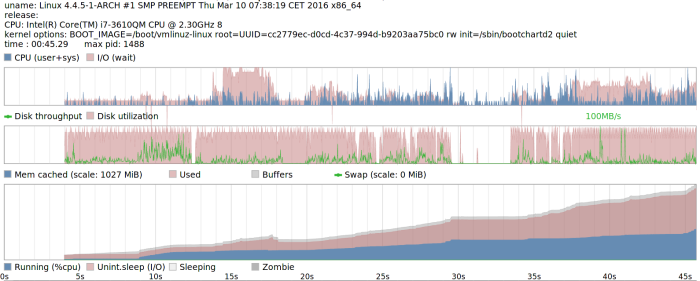

Now that all the prep-work is done, we can actually get to the performance improvements. I’m going to start off with a screenshot of my initial benchmark.

Note that, while the total runs past 45 seconds, the analysis terminates (in my case) once mutter (GNOME Shell) starts up. The drop in CPU and disk usage is when I reach the login screen. So, in my case, it takes about 40 seconds to get from the boot menu to the login screen.

To start the analysis, the first thing to do is to add a different init script to the kernel parameters, which the Arch Wiki has a detailed guide on accomplishing. Add the following:

init=/usr/bin/e4rat-lite-collect

Note: the argument audit=1 was previously included here, but some users reported issues (segfaults, etc.) with it. The collect init enables auditing (in case it isn’t already) and records the files opened during the first 120 seconds of the boot process. This timeout is the default, and can be changed in /etc/e4rat-lite.conf. Assuming all went well, a log listing everything that was audited will be generated at /var/lib/e4rat-lite/startup.log. Once this is done, optimizations can be applied.
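Since an empty or missing log means the collect step did not work, a quick check before moving on can save a pointless realloc run. A minimal sketch; check_startup_log is hypothetical, and it defaults to the log path given above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm that e4rat-lite-collect produced a non-empty log.
check_startup_log() {
    local log="${1:-/var/lib/e4rat-lite/startup.log}"
    if [ ! -s "$log" ]; then
        echo "no data: $log is missing or empty" >&2
        return 1
    fi
    # Arithmetic expansion strips any padding some wc versions add
    echo "$(( $(wc -l < "$log") )) lines in $log"
}
```

Run it once the collection period has passed; pass a different path if you changed the log location in /etc/e4rat-lite.conf.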

The first optimization is on the hard drive. The included tool moves files around on the disk, placing them more efficiently towards the exterior of the disk to make better use of read patterns and speeds. Note that it is recommended (though not required) to enter rescue mode (systemctl isolate rescue) to run this, as fewer files will be in use and therefore unmovable. Run the following command in the terminal:

$ e4rat-lite-realloc

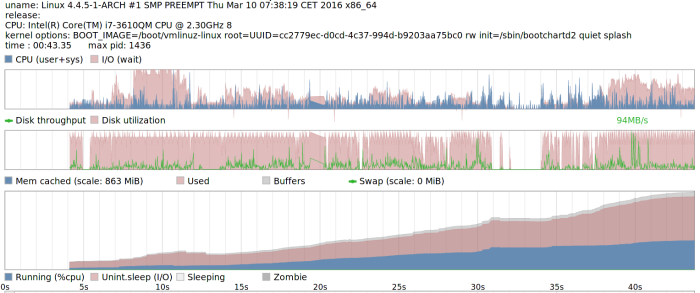

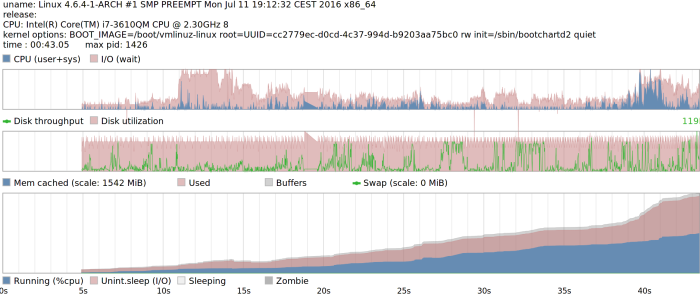

The process shouldn’t take very long. It is recommended to run the command a few times to ensure that as many files as possible are moved. Note that, as far as I could find, no portion of the Linux kernel is directly included in this, meaning that performance should not change when choosing a different kernel or upgrading one. Once this was completed, I ran another benchmark to plot the immediate difference.
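Running the command a few times in a row is easy to script. A minimal sketch; repeat_cmd is hypothetical, and the number of passes is up to you, since I don’t have a hard number to recommend.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command N times in a row, stopping at the first failure.
repeat_cmd() {
    local n="$1" i
    shift
    for ((i = 1; i <= n; i++)); do
        echo "pass $i of $n" >&2
        "$@" || return   # return the failing command's exit status
    done
}
```

For example, as root: `repeat_cmd 3 e4rat-lite-realloc`.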

In case it isn’t completely obvious, my boot time (to login) was reduced to just over 30 seconds, an improvement of roughly 10 seconds. However, this is not the last component of the e4rat set of tools. The last one is intended (from what I understand) to cache files early on for later use, to avoid delays. To accomplish this, a (permanent) init script is used:

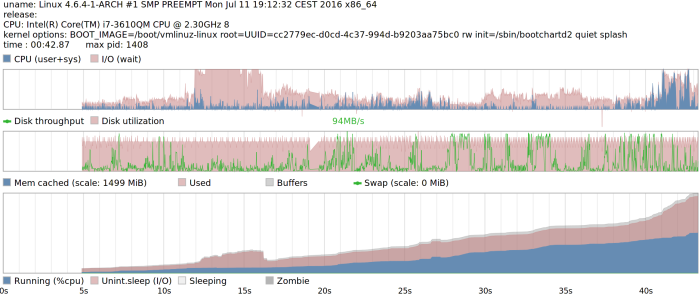

init=/usr/bin/e4rat-lite-preload
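Because this parameter is meant to stay, it can be made permanent in the boot loader configuration. For GRUB, one way is to edit GRUB_CMDLINE_LINUX_DEFAULT in /etc/default/grub and regenerate grub.cfg. The function below is a hypothetical sketch assuming GNU sed and a double-quoted, single-line value; it replaces any existing init= entry rather than adding a second one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set (or replace) init= in GRUB_CMDLINE_LINUX_DEFAULT.
# Assumes GNU sed and a value like GRUB_CMDLINE_LINUX_DEFAULT="loglevel=3 quiet".
set_grub_init() {
    local file="$1" init="$2"
    # Remove any existing init=... word (preceded by a quote or a space)
    sed -i -E '/^GRUB_CMDLINE_LINUX_DEFAULT=/ s/([" ])init=[^" ]*/\1/' "$file"
    # Append the new init= just before the closing quote, trimming trailing spaces
    sed -i -E "/^GRUB_CMDLINE_LINUX_DEFAULT=/ s| *\"\$| init=${init}\"|" "$file"
}
```

For example, `set_grub_init /etc/default/grub /usr/bin/e4rat-lite-preload` (as root), followed by `grub-mkconfig -o /boot/grub/grub.cfg` to regenerate the GRUB configuration.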

One thing to note is that this script, unlike the previous, and contrary to some forum posts, does not require audit, so it can be used on any kernel installation without the need to compile a custom kernel for each usage. The following are my results with it:

What makes it clear that the preload is working is that, unlike the previous charts, there is no dip in this one. The preload is caching files the whole time, so it’s actually quite difficult to tell when the login screen appears. I estimate it to be around the 25 to 30 second point. I didn’t notice a significant difference leading up to the login screen. What I did notice, however, was the difference once logged in. As I have several applications on startup, they were open almost immediately after login, since their files were preloaded.

I also compared this tool with a different one called systemd-readahead, which accomplishes a task similar to the preload. Not only did it make no notable difference, but in a few runs it actually increased boot times compared to runs without it. I’m not sure whether I misconfigured it (it’s easy to set up) or did not do enough boots for it to become effective (I tried about 4 times). Regardless, this tool made no improvement to booting.

Plymouth

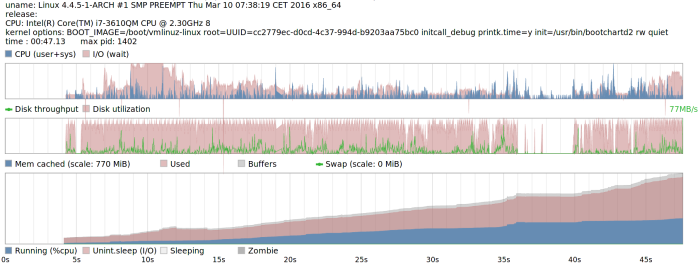

One thing I suspected would make a difference in boot times is plymouth, since it adds a splash screen while booting, which no doubt adds some overhead. I compared boot times with it disabled. First, before the disk optimization:

Note that, in this case, disabling it reduced my boot time by about 5 seconds. Next is the run after the optimization, again without plymouth enabled:

This goes to show the difference the disk optimization made. Here, there’s only about 1 second of difference, so it’s likely there was some bottleneck loading plymouth that led to the increased delay.

Finally, the preload without plymouth:

Honestly, I see no difference here compared to the run with plymouth enabled. Needless to say, the difference isn’t significant enough to justify booting without it. That, and I like the splash screen.

Conclusion

While a 10 second boot time reduction may matter more to some than to others, I don’t find the time it takes to get to the login prompt (GDM in my case) especially noteworthy. This is particularly emphasized by the 30 seconds it still takes to reach it. What I do notice, however, is the responsiveness once I get to the prompt, and after logging in. Normally, there is a delay between when I see the login screen and when I can actually enter my password. Now, I find this practically non-existent. I can’t really boast about time savings, considering the effort put into getting it working definitely outweighs them, but it was an interesting learning experience. While there is definitely room for improvement in boot time optimization (particularly on hard disks), there doesn’t seem to be much interest from developers in tackling this, what with the rising popularity of SSDs. Either way, it’s interesting to try and see what can make a difference, even if small.

Hi

A very cool testing process. Many thanks for taking the time to share it with details.

I’d love to run the e4rat tools on my favorite monster (a splendid 2010 Atom laptop with a 5400 rpm HDD) that boots in 20 / 35 sec to CLI / GUI.

I have compiled the Arch stock kernel with AUDIT=y, audit with options=('staticlibs'), and e4rat, and added the mandatory bootloader entry. But e4rat collects nothing. I’d love to find out why.

% uname -rms

Linux 4.6.3-1-audit i686

% zgrep -i audit /proc/config.gz

CONFIG_LOCALVERSION="-audit"

CONFIG_AUDIT=y

CONFIG_HAVE_ARCH_AUDITSYSCALL=y

CONFIG_AUDITSYSCALL=y

CONFIG_AUDIT_WATCH=y

CONFIG_AUDIT_TREE=y

# CONFIG_NETFILTER_XT_TARGET_AUDIT is not set

CONFIG_INTEGRITY_AUDIT=y

CONFIG_AUDIT_GENERIC=y

# CONFIG_AUDIT_ARCH_COMPAT_GENERIC is not set

% sudo journalctl -xb|grep e4rat

juil. 11 23:24:49 gwenael kernel: Kernel command line: BOOT_IMAGE=../vmlinuz-linux-audit root=/dev/mapper/vg1-sys1b rw resume=UUID=b43bc921-65cb-4b55-9f7d-34b06d5dac14 audit=1 init=/sbin/e4rat-collect zswap.enabled=1 zswap.max_pool_percent=25 zswap.compressor=lz4 systemd.restore_state=0 initrd=../initramfs-linux-audit.img

juil. 11 23:26:31 gwenael audit: EXECVE argc=9 a0="ls" a1="-p" a2="--color=auto" a3="--group-directories-first" a4="-l" a5="-G" a6="-h" a7="-p" a8="/var/lib/e4rat/"

juil. 11 23:26:31 gwenael audit: PATH item=0 name="/var/lib/e4rat/" inode=428533 dev=fe:01 mode=040755 ouid=0 ogid=0 rdev=00:00 nametype=NORMAL

% ll /var/lib/e4rat/   (run more than 5 minutes after boot finished)

total 0

Do you believe the fact that I compiled a custom kernel with only the modules I’ve used over the last 6 months (or some other reason I can’t see) explains this emptiness?

I’m glad to see someone else attempting what I’ve tried.

As far as I can tell, the issue is not likely the custom kernel parameters, as they seem to be configured correctly. What I think the issue is (based on the output you’ve provided) is that no files exist in /var/lib/e4rat/. In my experience, the startup.log file needed to be created manually (sudo touch /var/lib/e4rat/startup.log) in order for it to be written to. See if this helps.

Hi! Glad you saw my comment.

No worries, I saw your note about manually touching the e4rat log file and did that, but it stayed empty. Afterwards I deleted it again and rebooted, with the unlucky result above.

Also, I recompiled the Arch stock kernel with its modules and tried again, but no luck, yet. I’ll try again, and maybe I’ll stumble upon the little something I’m missing at the moment. Meanwhile, if an idea flies through your mind, please share it.

I’m wondering if it’s an issue with e4rat writing the output; perhaps the workaround for recompiling it doesn’t work anymore. Do you know whether e4rat is run at all? Perhaps modify its launch script to create a temporary file somewhere?

Yes, I also put e4rat failing to write the output at the top of the possible issues. My reason is that I can clearly see e4rat-collect starting when boot begins. Also, if I change the e4rat log level to 31, it prints messages for quite a while during startup. After that, the output file is… empty.

- auditd was disabled (default); I rebooted after enabling it.

- My / partition is on LVM, but /boot is not. I tried again after setting the output path to /boot/e4rat_startup.log.

No change, yet.

Starting with the default Arch kernel (with AUDIT=y), journalctl gave two different outputs regarding e4rat, neither of which I could make sense of.

Unless you’re OK with me continuing to pollute your article’s comments, I’ll go share this on the Arch forum.

Sure go ahead. Send a link to your post here as well. It hadn’t occurred to me that it could be an LVM issue, but I suppose that’s possible.


Yeah thanks, and done.

Note: I followed up under your initial post there, to help focus what little attention there is on e4rat.

Got e4rat-lite-{collect,realloc,preload} installed and running well, finally. My “Terminator” Dell mini now loads Wi-Fi, a browser, word processing, loaaaads of emails and a file manager, and decrypts my vault in (much) less than 60 sec from a cold boot. Houba hop!

@cubethethird your post was the initiator of this welcome learning session. Now if you go back to optimizing yours (which ain’t that low pro and boots slower :p), you may want to load your session a bit: I’m certain you’ll appreciate that ‘preload’ powaa!

Thanks for the guide, but it doesn’t create startup.log. I tried editing config files, changing log directories and creating empty log files, but it doesn’t output a log file. I have been struggling for months to get this working, but it doesn’t 😦

Are you using the e4rat-lite package, instead of the original e4rat? The original one gave me issues with the startup file and I had to originally manually edit and re-build the package. After re-uploading e4rat-lite to the AUR I removed those steps from this post since the issue was no longer present with it.


I am using e4rat-lite. I get a segmentation fault during boot.

Hi, I tried following the guide, but at boot it says "Segmentation fault backtrace() 9 address". I’m using e4rat-lite and did build a custom kernel with audit.

To be sure, does the custom kernel boot without using any of the e4rat-lite tools? Also can I assume that the segfault occurs during the collect phase?


Booting custom kernel with audit=1 init=/usr/sbin/e4rat-lite-collect

e4rat-lite-collect also segfaulting in my case.

Removing audit=1 did the trick in my case.

Hmm interesting. To be clear, when removing the audit parameter, the startup.log file was generated successfully?

Yes, it was created after the configured period. Before removing audit=1, no startup.log was created even after 180 seconds (my setting). (I don’t think the process can do anything useful after a segfault :-))

Thanks. That’s great to know. I’ll adjust the instructions accordingly.


Everything works after removing audit=1. thanks Huz.


Thanks for this handy write-up!

Something I discovered while doing this today is that instead of compiling your own kernel, you can just install linux-hardened, since it has CONFIG_AUDIT enabled by default. (I also installed linux-hardened-headers, though I don’t know if that was necessary). Then just point your bootloader to use that kernel while running collect and realloc. It makes the whole process much simpler and faster.

Thanks for the info. I’ll add it to my post. I suspect the headers would be necessary, as this recreates the typical use case of the system running normally, so everything is collected during boot.

Interesting article, thank you for sharing!

I have to ask: do you boot from a mechanical drive? 40 seconds, and even 30, sound like a very long time to boot from an SSD. My system starts in about 4 seconds (off an M.2 drive, a Samsung 950 Pro). However, my UEFI BIOS (Gigabyte Gaming 7 motherboard) takes about 30 to 40 seconds to hand over control to the Linux loader :-(.

Glad you found it interesting. As I said at the beginning, this method isn’t useful for SSDs, so I figured it would be fairly obvious that I’m booting from an HDD, hence the longer boot times. That being said, I’ve made some changes (which at some point I should post here) for ongoing optimization that have actually reduced the average boot time to ~20 seconds.

At the time, I was using an older laptop, which only has an HDD with no space for additional storage. I have a newer desktop which has both, and naturally does boot more quickly.

I’m surprised your BIOS takes that long to get you to the Linux loader. Perhaps check for options for quick boot? For me, cold boot takes only about 10 seconds.

Oh, I missed that you mentioned it’s not for SSDs :-). Sorry about that. Yeah, this mobo is really slow when it comes to booting. I posted about it on Reddit in some Linux forum and another person confirmed it. Otherwise it’s rock stable with an i7 overclocked to 4.6 GHz, two M.2 slots and 4 SATA ports (unfortunately on a shared bus, but I’m only using two anyway). I chose it because it was supposed to work well with Linux. The only bad things are the slow boot time and the fact that I couldn’t get Sound Blaster’s Core sound to work. It’s supposed to be possible, but I can’t get sound from it. But the GTX card has sound for HDMI purposes, so I get sound that way. I am always on the lookout for a better replacement, though, so if you have suggestions I am all ears.

If you’re looking for just a mobo replacement there isn’t much I can recommend, since I went for an AMD Ryzen build. If you’re in a pinch and need alternative sound options though, you could get a cheap sound card.


I am not looking actively. I put together this machine, which was quite beefy back in May 2016, so I expect it to last at least 2 more years, but I remember how hard it was to find a motherboard with high enough specs that would also play well with Linux in terms of drivers. I am now also aware that boot time is kind of a factor too, so from time to time I look around at motherboard reviews and discussions.

Anyway, another thing to try to squeeze out a tiny bit of boot time is to boot the kernel directly from the UEFI firmware, skipping traditional loaders (GRUB, LILO & co). I boot with systemd-boot (a.k.a. gummiboot), but with a UEFI BIOS that is really superfluous. I even press F12 for the UEFI boot manager and skip systemd-boot altogether when I want to boot into Windows. However, I wasn’t able to configure the kernel to be loaded by UEFI when I was installing Arch. I followed several tutorials, the Arch Wiki and so on, but I didn’t get it at the time, and I haven’t looked into it since. I don’t think it would shave many seconds off the boot time, but probably a couple. And it would remove one software component from the dependency tree, along with its configuration work and so on :-). I am also not sure if and how kernel parameters can be passed from UEFI, so maybe UEFI boot can’t be used because of that. I don’t want to sit and type kernel parameters into some obscure shell every time I start my computer either.

Yet another way to shave off boot time is to skip the login manager altogether. I am not even using a desktop environment, just a window manager (Compiz in my case), configured for speed and light resource use. I know I could use i3 and a bunch of other even more lightweight ones, but a little bit of eye candy is not so bad. Since you have a laptop, you can configure your system to ask you for a user and password before you are logged into the graphical session, and then let xinit do its job automatically and start your window manager, or you can have your system log your user in automatically and just ask for the password. Since this computer is stationary and always at home, and I don’t have small children and such, I have configured mine to log my user in directly. That is a tremendous difference in time compared to using any kind of login screen, even XDM or LightDM. KDM and GDM are horrible in regard to startup time, as are the entire KDE and GNOME desktops. I’m not saying they are bad; they look nice and are quite polished, but I really live in 3 applications: Firefox, Emacs and a terminal :-). I don’t need all the blips and blops around on my screen. That’s just me, of course, so feel free to ignore it, but play around and see how it changes your startup times.